Milan, December 3, 2025. The atmosphere at WPC always has a special energy, but this year, for me, it felt different. It was my first WPC as a speaker.

Getting up on that stage isn’t just about sharing slides; it’s about conveying your vision. Together with my colleague and friend Laura Villa, we took on an ambitious challenge: condensing into 50 minutes the evolution of a paradigm that is redefining modern business, “Real-Time”.

Our goal? To compare two giants of the Azure ecosystem: Databricks and Microsoft Fabric. We didn’t want to stop at a technical comparison; we wanted to answer the question every IT architect is asking today: which tool do I really need to master data speed?

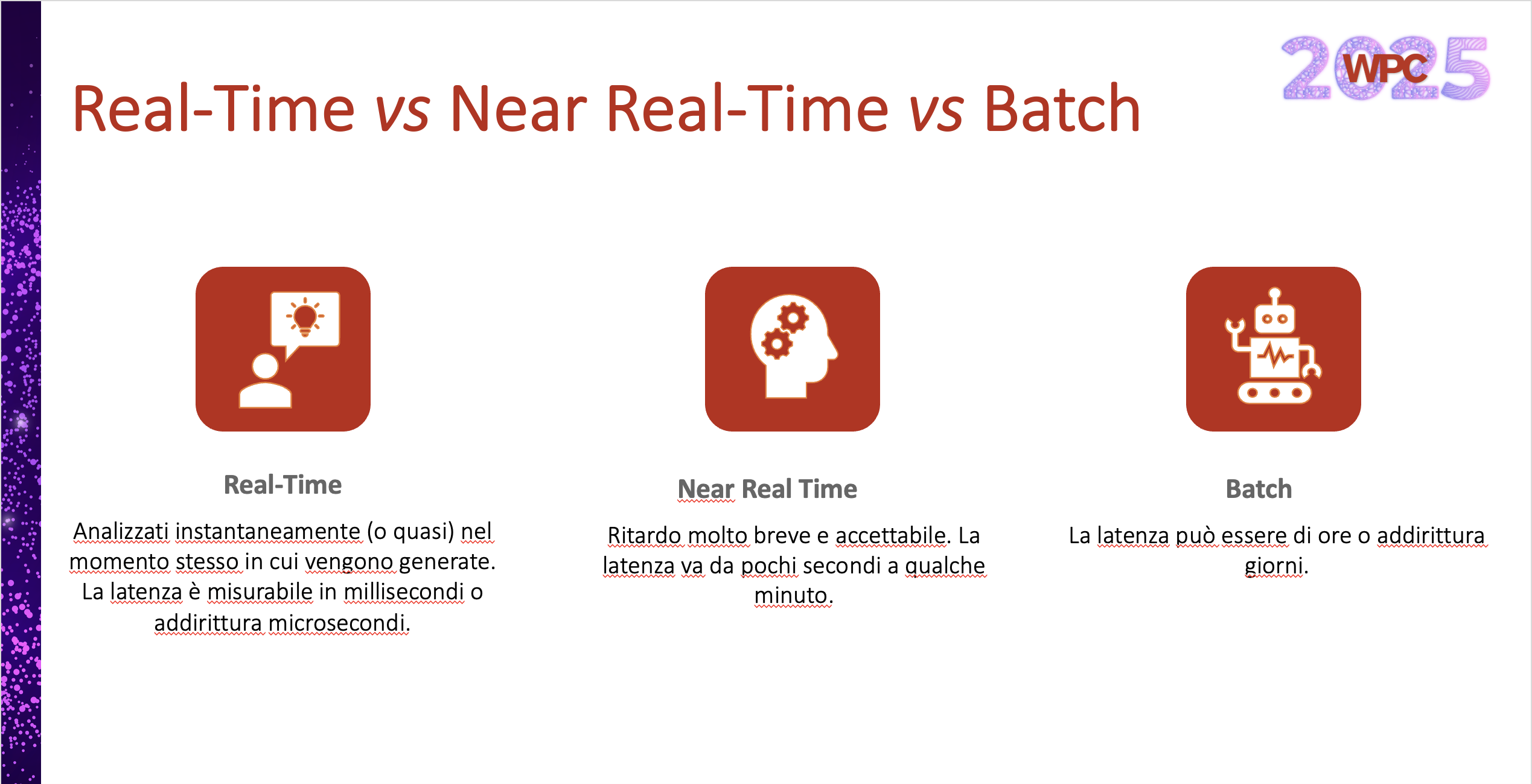

Different ways of processing data: Let’s clarify

Before talking about technologies, we need to agree on the “vocabulary of data time.” The term “real-time” is often misused, but for a professional, nuances are everything.

We have identified three scenarios, because understanding latency requirements is the first step in avoiding architectural mistakes:

- Batch: Here, the data “rests.” There is no rush. We are talking about scheduled processes (daily, weekly, monthly) where latency is not a critical constraint. This is the realm of historization and consolidated reporting.

- Near Real-Time: The pace picks up. To fall into this category, processing must take place within a window ranging from a few seconds to a few minutes. This is the standard for modern operational monitoring.

- Real-Time: The keyword is instant processing. Here, latency is measured in milliseconds or even microseconds. This is the territory where reaction speed makes the difference between a prevented failure and a stopped production line.
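To make the three scenarios operational, here is a minimal Python sketch that maps a latency requirement to a category. The cut-offs (under one second for real-time, up to ten minutes for near real-time) are our own illustrative assumptions, not thresholds from any standard; adjust them to your own SLAs.

```python
from datetime import timedelta

# Illustrative cut-offs (assumptions): the talk describes real-time as
# "milliseconds or microseconds" and near real-time as "a few seconds to a
# few minutes"; the exact boundaries below are our own choice.
REAL_TIME_MAX = timedelta(seconds=1)
NEAR_REAL_TIME_MAX = timedelta(minutes=10)


def classify_latency(latency: timedelta) -> str:
    """Map an end-to-end latency requirement to one of the three scenarios."""
    if latency < timedelta(0):
        raise ValueError("latency cannot be negative")
    if latency < REAL_TIME_MAX:
        return "real-time"
    if latency <= NEAR_REAL_TIME_MAX:
        return "near real-time"
    return "batch"


if __name__ == "__main__":
    for requirement in (timedelta(milliseconds=5),
                        timedelta(seconds=30),
                        timedelta(days=1)):
        print(requirement, "->", classify_latency(requirement))
```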

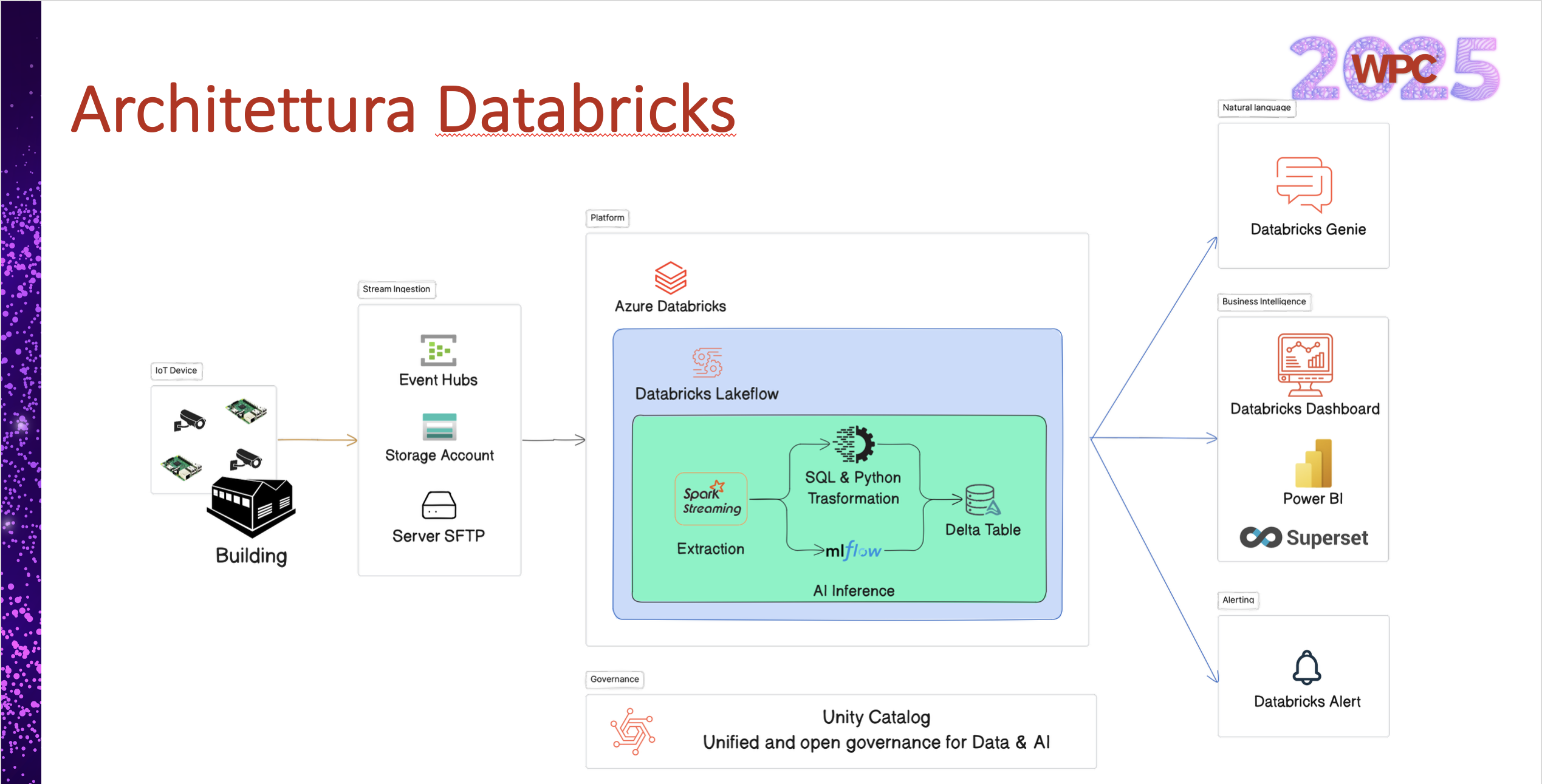

Use Case: Smart Buildings and IoT

To make the theory tangible, we brought a real-world scenario to the stage: a Smart Building. Imagine a building dotted with devices that monitor every breath of the structure: energy production and consumption, machinery performance, security cameras, and environmental sensors.

The challenge is not only to collect data, but to transform it into decisions while the event is still happening.

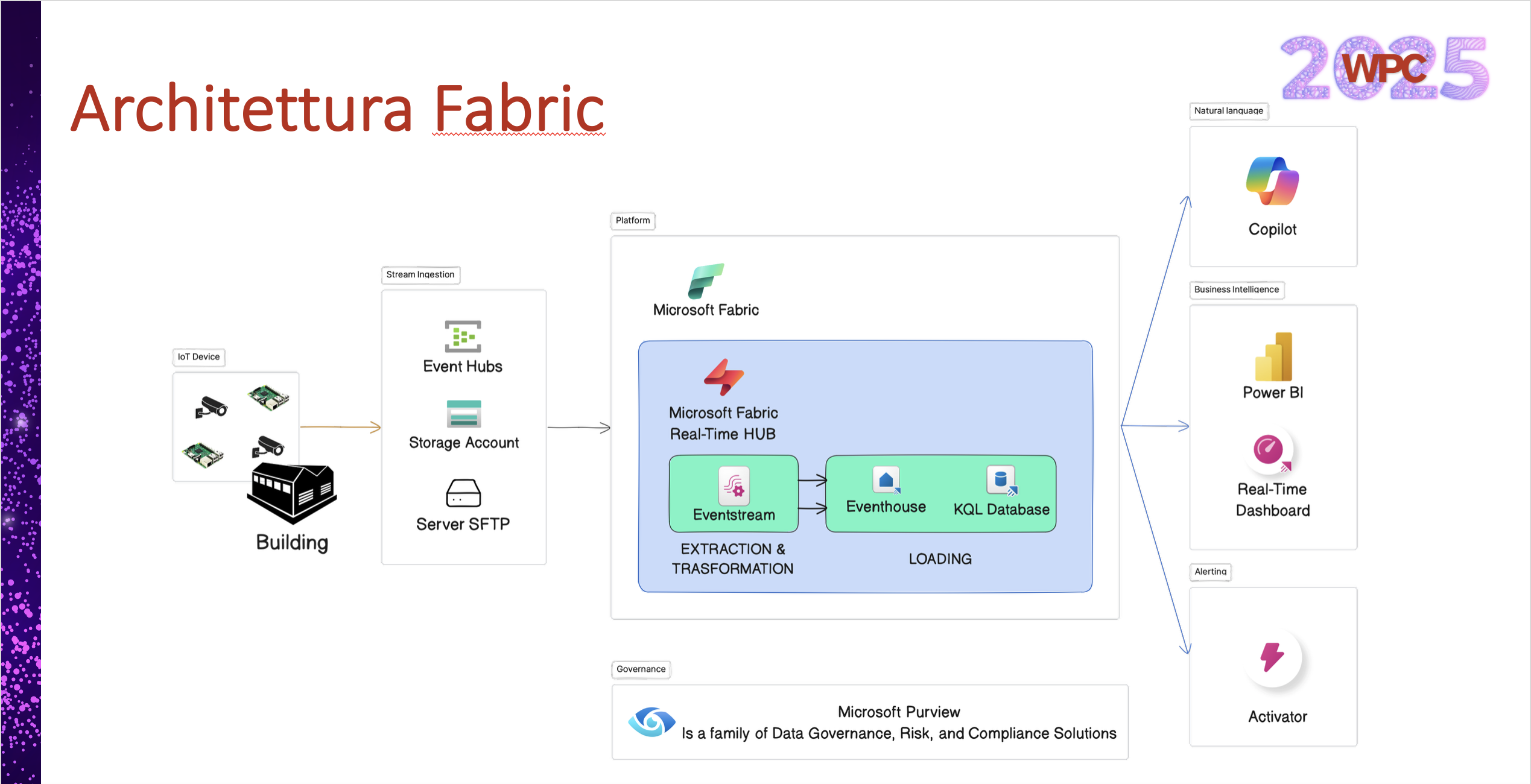

Architectural Note: Both solutions use the same data sources, namely Azure Event Hubs for streaming, SFTP servers, and Azure Blob Storage for unstructured data.
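For illustration only, here is what a single smart-building event might look like once it reaches the pipeline, together with a minimal Python parser. The JSON field names (`deviceId`, `metric`, `value`, `ts`) and the `SensorReading` type are hypothetical; real payloads depend on how your devices publish to Azure Event Hubs.

```python
import json
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class SensorReading:
    """One telemetry event from a (hypothetical) smart-building device."""
    device_id: str
    metric: str
    value: float
    timestamp: datetime


def parse_event(body: bytes) -> SensorReading:
    """Decode a JSON event body; the field names are assumptions."""
    raw = json.loads(body)
    return SensorReading(
        device_id=raw["deviceId"],
        metric=raw["metric"],
        value=float(raw["value"]),
        timestamp=datetime.fromisoformat(raw["ts"]),
    )


# Hypothetical sample payload, as raw bytes.
sample = (b'{"deviceId": "hvac-03", "metric": "temperature_c", '
          b'"value": 21.5, "ts": "2025-12-03T10:15:00+00:00"}')
reading = parse_event(sample)
```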

Azure Databricks Architecture

Microsoft Fabric Architecture

Databricks: The Power of Lakeflow

When we talk about Databricks, we talk about control and engineering power. To manage streaming, Databricks has extended the features of the Spark Declarative Framework through Lakeflow.

Lakeflow is not just a tool; it is a declarative framework that lets you build robust, scalable pipelines (both batch and streaming) by defining transformations in SQL or Python.
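To show what “declarative” means in practice, here is a deliberately tiny plain-Python sketch: each table is a function that declares its inputs, and a hypothetical `run_pipeline` resolves the dependency graph and executes the tables in the right order. This is not the Lakeflow API (the real framework uses decorators such as `@dlt.table` over Spark DataFrames); the `table` decorator, the table names, and the sample data are all assumptions.

```python
from graphlib import TopologicalSorter  # Python 3.9+
from typing import Callable, Dict, List, Sequence

# Hypothetical mini-framework (NOT the Lakeflow API): table definitions are
# registered with their dependencies, and the "engine" plans the execution.
_TABLES: Dict[str, Callable[..., List[dict]]] = {}
_DEPS: Dict[str, List[str]] = {}


def table(depends_on: Sequence[str] = ()):
    """Register a function as the definition of a table: the 'what'."""
    def register(fn: Callable[..., List[dict]]) -> Callable[..., List[dict]]:
        _TABLES[fn.__name__] = fn
        _DEPS[fn.__name__] = list(depends_on)
        return fn
    return register


def run_pipeline() -> Dict[str, List[dict]]:
    """The 'how': resolve dependencies, then materialize tables in order."""
    results: Dict[str, List[dict]] = {}
    for name in TopologicalSorter(_DEPS).static_order():
        inputs = [results[dep] for dep in _DEPS[name]]
        results[name] = _TABLES[name](*inputs)
    return results


# Declared "out of order" on purpose: the planner, not the file, decides.
@table(depends_on=["hvac_readings"])
def hvac_total(hvac: List[dict]) -> List[dict]:
    return [{"total_kwh": round(sum(r["kwh"] for r in hvac), 2)}]


@table(depends_on=["raw_readings"])
def hvac_readings(raw: List[dict]) -> List[dict]:
    # Keep only HVAC devices (hypothetical naming convention).
    return [r for r in raw if r["device"].startswith("hvac")]


@table()
def raw_readings() -> List[dict]:
    # Stand-in for a streaming source such as Azure Event Hubs.
    return [
        {"device": "hvac-01", "kwh": 3.2},
        {"device": "hvac-02", "kwh": 4.1},
        {"device": "light-07", "kwh": 0.4},
    ]


if __name__ == "__main__":
    print(run_pipeline()["hvac_total"])
```

Note that `hvac_total` is declared before its inputs: the execution order comes from the dependency graph, not from the order of the code, which is the essence of the declarative approach.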

Key Features

- Core Spark & Structured Streaming: Unifies batch and streaming on the same standard APIs, with automatic checkpoint management, native fault tolerance, and auto-scaling, all without extra implementation work.

- Declarative Orchestration: You define the what (business logic), the system handles the how (dependencies, retries, execution).

- Native Data Quality: Expectations are built into the pipeline. Data validation and observability are not an “afterthought”; they are part of the flow.

- Incremental Processing: Thanks to Streaming Tables, ingestion is continuous and incremental, with each update processing only newly arrived data.
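Expectations and incremental processing can be illustrated with a small plain-Python model: a hypothetical `StreamingTable` keeps a checkpoint offset so that each run reads only events it has not seen yet, and applies named expectations that drop invalid rows while counting violations. In Lakeflow this behavior is declared (for example with `@dlt.expect_or_drop`) and checkpoints are managed for you; the class, rule names, and data below are assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical model (NOT the Lakeflow API) of two ideas: expectations that
# drop invalid rows, and a checkpoint so each run only reads new events.
Expectation = Callable[[dict], bool]


@dataclass
class StreamingTable:
    expectations: Dict[str, Expectation]
    checkpoint: int = 0                    # offset of the next unread event
    rows: List[dict] = field(default_factory=list)
    dropped: Dict[str, int] = field(default_factory=dict)

    def process(self, source: List[dict]) -> int:
        """Read events after the checkpoint; return how many were accepted."""
        accepted = 0
        for event in source[self.checkpoint:]:
            failed = [name for name, check in self.expectations.items()
                      if not check(event)]
            for name in failed:            # record each violated expectation
                self.dropped[name] = self.dropped.get(name, 0) + 1
            if not failed:
                self.rows.append(event)
                accepted += 1
        self.checkpoint = len(source)      # commit progress for the next run
        return accepted


# Example: an append-only event log read in two incremental runs.
sensors = StreamingTable(expectations={
    "valid_temperature": lambda e: -40 <= e["temp_c"] <= 85,
    "has_device": lambda e: bool(e.get("device")),
})
log = [{"device": "s1", "temp_c": 21.0}, {"device": "s2", "temp_c": 999.0}]
first_run = sensors.process(log)           # s2 violates valid_temperature
log += [{"device": "", "temp_c": 20.0}, {"device": "s3", "temp_c": 19.5}]
second_run = sensors.process(log)          # only the two new events are read
```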

Microsoft Fabric: The certainty called Kusto

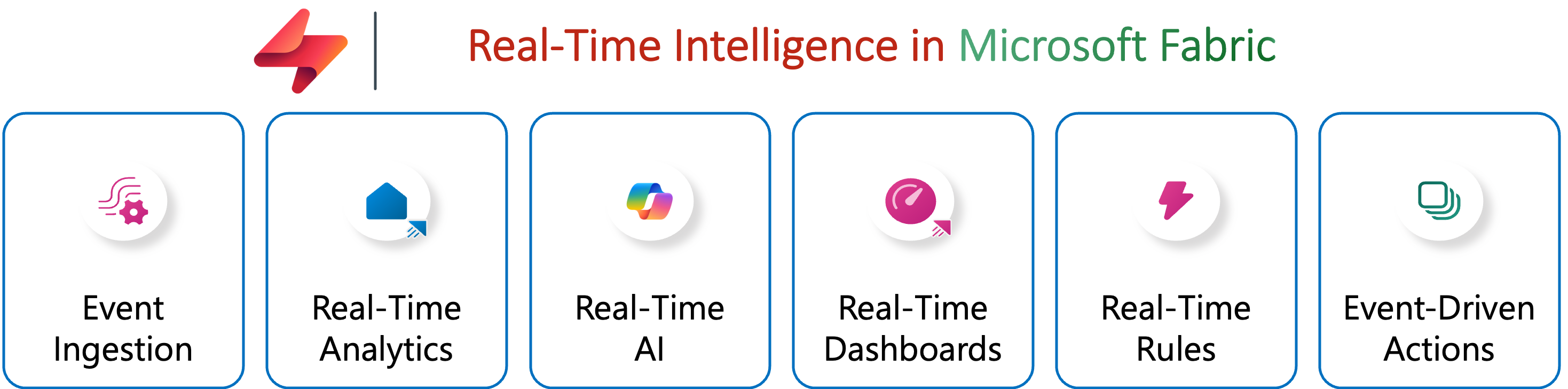

Moving on to Microsoft Fabric, the approach changes radically. Here, the watchword is integration. Fabric offers a central hub dedicated to real-time, and its beating heart is the KQL (Kusto Query Language) engine.

Fabric’s Real-Time Hub offers a unified environment with integrated connectors, immediate visualization, and direct action on streams.

Fabric’s Real-Time Ecosystem

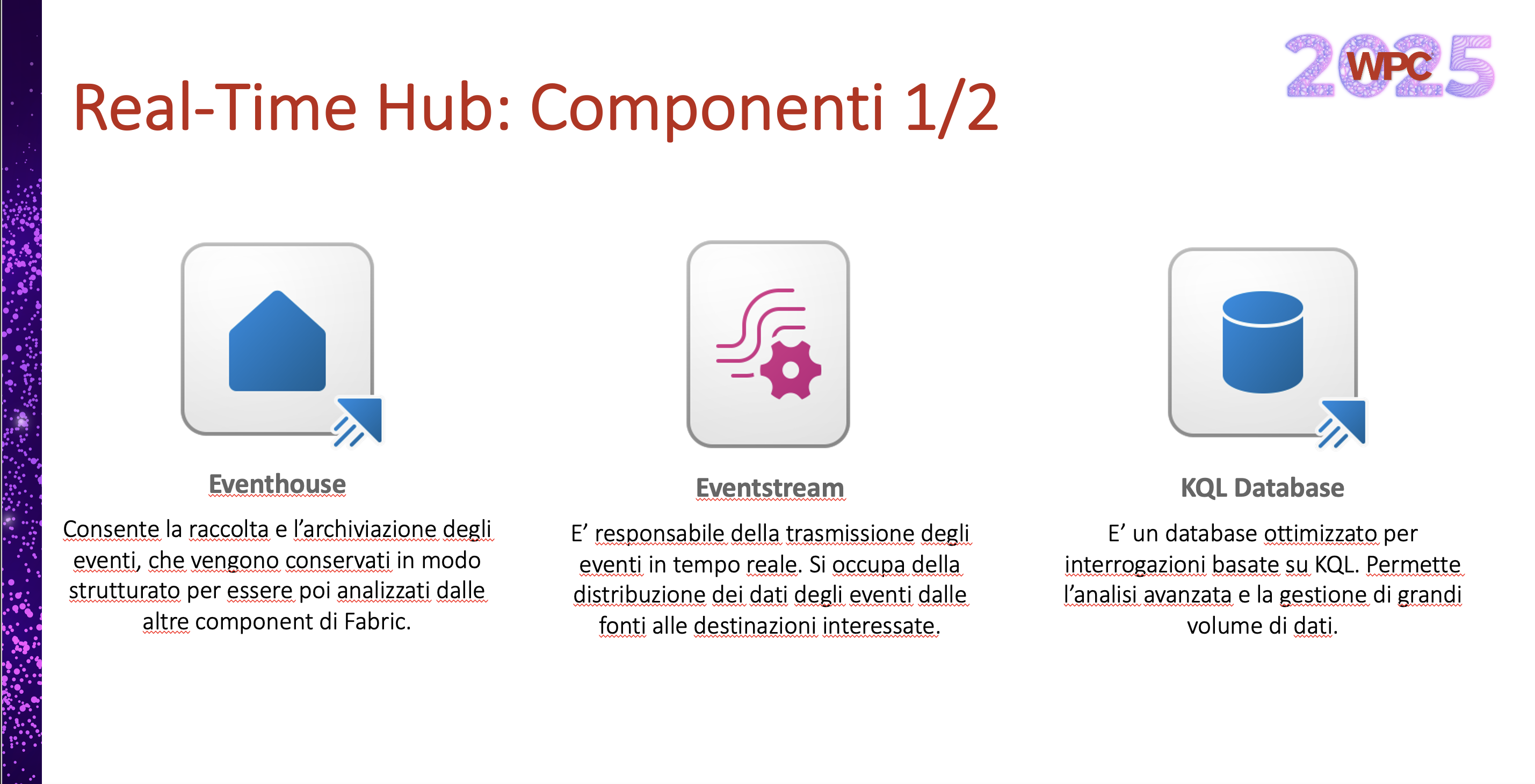

Fabric’s strength lies in the synergy of its components:

- Eventhouse: The intelligent warehouse. It collects and stores events in a structured way, making them immediately available for analysis.

- Eventstream: The nervous system. It is responsible for transmitting and distributing events from sources to destinations in real time.

- KQL Database: The engine. A database optimized for time series and lightning-fast KQL queries on huge volumes of data.

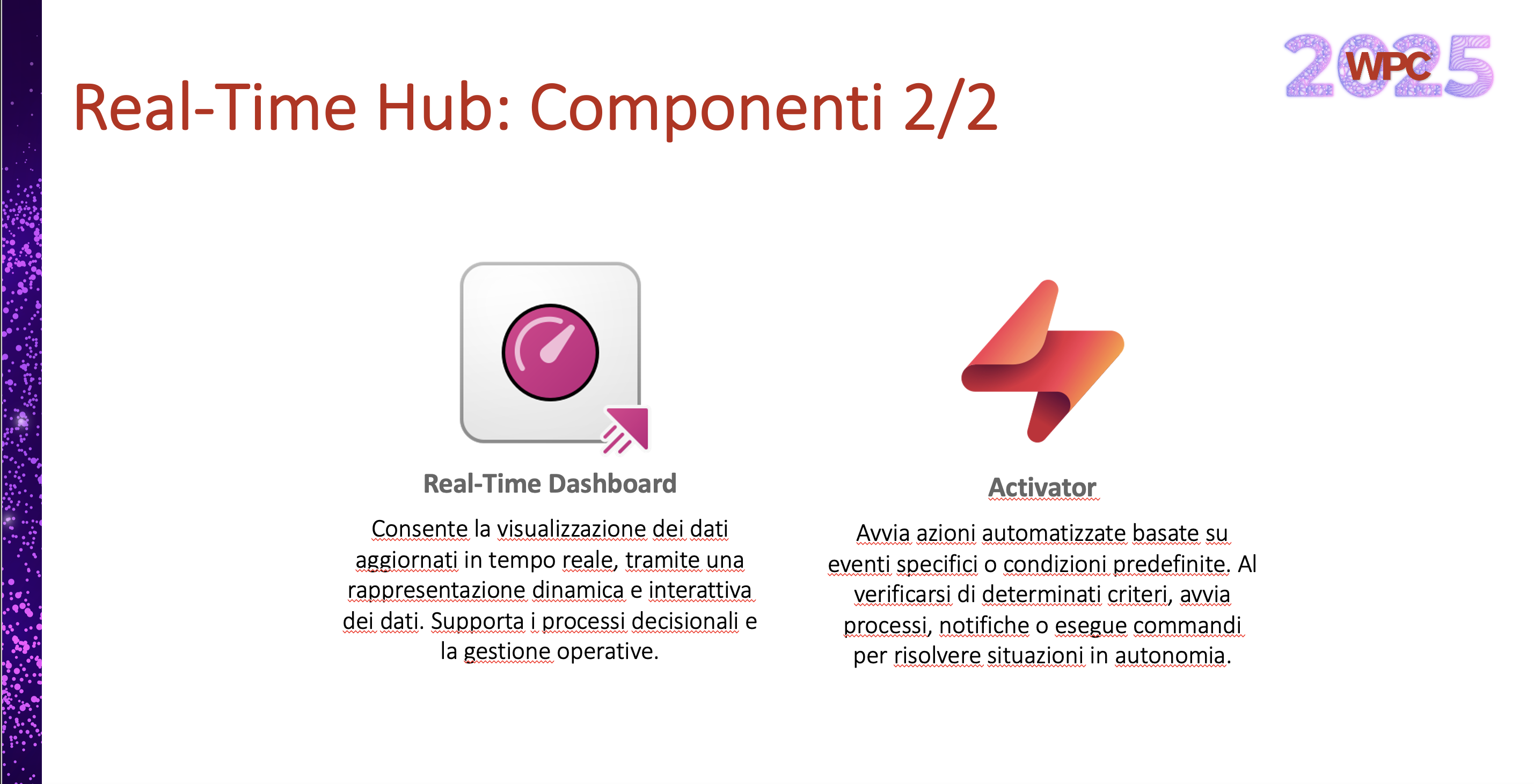

- Real-Time Dashboard: Takes dynamic visualization beyond the limits of classic BI, supporting instant operational decisions.

- Activator: The operational arm. It doesn’t just display data; it acts. It initiates processes, sends notifications, or executes automatic commands when specific conditions occur.
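The condition-to-action idea behind Activator can be sketched in a few lines of Python. Activator itself is configured inside Fabric, so the `Rule` class, the overheating threshold, and the device names here are purely hypothetical. One design choice in this sketch (not a claim about Activator’s internals): the rule fires only when its condition becomes true for a device, not on every event while it stays true, which avoids flooding operators with duplicate alerts.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

# Hypothetical condition -> action rule (NOT the Activator API). State is kept
# per device so the rule fires on the transition into the alert condition.


@dataclass
class Rule:
    name: str
    condition: Callable[[dict], bool]
    action: Callable[[dict], str]
    _active: Dict[str, bool] = field(default_factory=dict, init=False)

    def check(self, event: dict) -> Optional[str]:
        """Return the action's result if the condition just became true."""
        device = event["device"]
        now = self.condition(event)
        was = self._active.get(device, False)
        self._active[device] = now
        return self.action(event) if now and not was else None


overheat = Rule(
    name="boiler_overheat",
    condition=lambda e: e["temp_c"] > 30,          # threshold is illustrative
    action=lambda e: f"notify: {e['device']} at {e['temp_c']} C",
)

readings = [28, 31, 33, 27, 32]                     # one boiler, over time
alerts: List[str] = []
for temp in readings:
    result = overheat.check({"device": "boiler-1", "temp_c": temp})
    if result is not None:
        alerts.append(result)
```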

The Verdict: Which one should you choose?

Let’s return to the question that opened our talk at WPC: which platform is best?

The honest answer, from a professional standpoint, is: it depends on your primary objective.

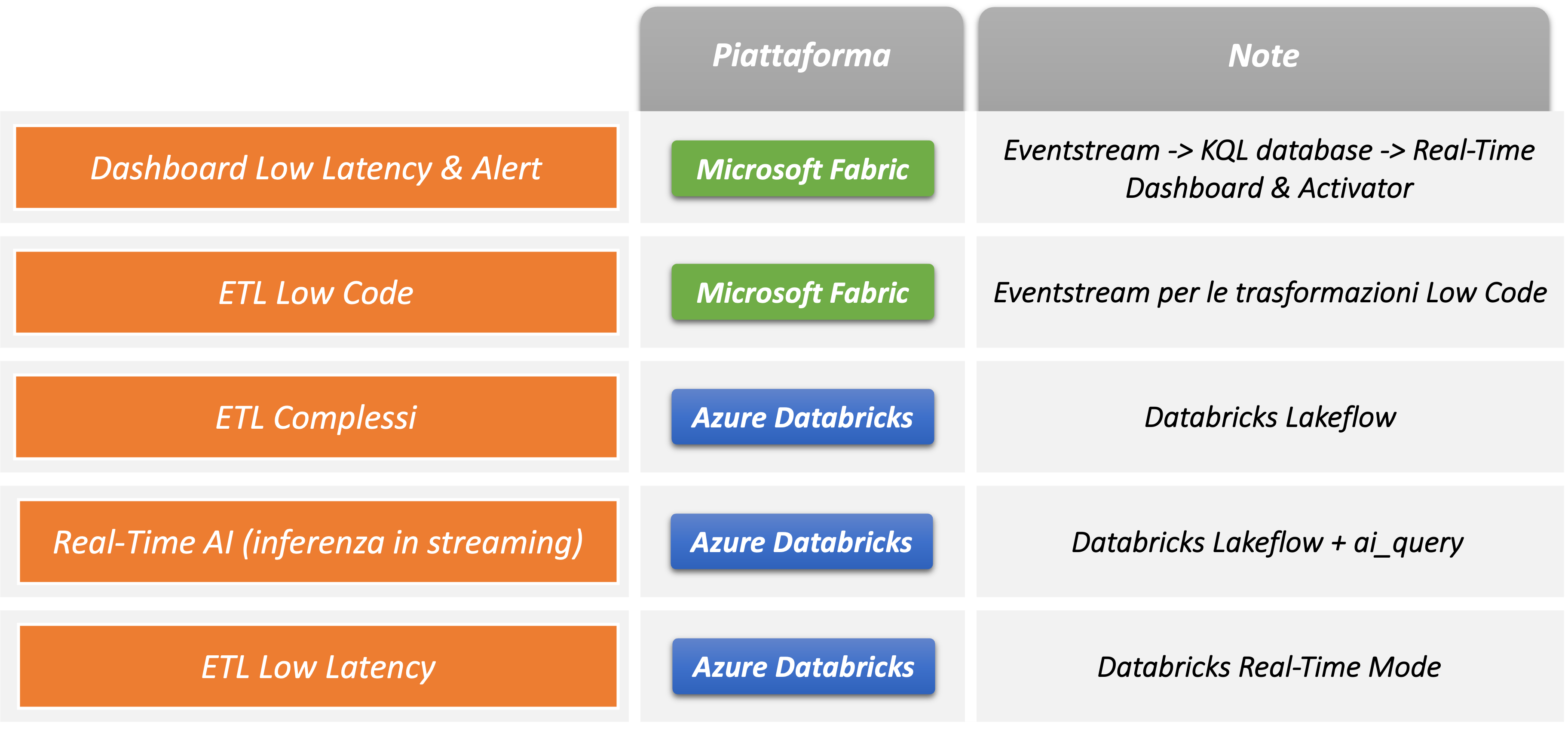

- Choose Databricks if: You are looking for maximum engineering flexibility. Built on Apache Spark, Databricks excels when you need to develop complex, customized pipelines. Furthermore, in scenarios where ultra-low latency is the critical factor, the new “Real-Time Mode” is an impressive demonstration of brute force.

- Choose Fabric if: You want speed of implementation and integration (Low-Code/No-Code). The Kusto engine is a cornerstone for time-series analysis with native primitives. The ability to go from raw data to a Real-Time Dashboard in just a few clicks is a huge competitive advantage for your business.
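Native time-series primitives are a large part of what makes KQL so productive. A query along the lines of `Readings | summarize avg(Value) by bin(Timestamp, 5m)` (table and column names hypothetical) buckets events into five-minute windows and averages each one. The plain-Python sketch below reproduces that aggregation to show what the engine is doing for you; it is an illustration, not how Kusto is implemented.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone
from statistics import mean
from typing import Dict, List, Tuple

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def bin_time(ts: datetime, size: timedelta) -> datetime:
    """Round a timestamp down to a multiple of `size` (cf. KQL's bin())."""
    return EPOCH + ((ts - EPOCH) // size) * size


def summarize_avg(readings: List[Tuple[datetime, float]],
                  size: timedelta) -> Dict[datetime, float]:
    """Group readings into fixed time windows and average each window."""
    buckets: Dict[datetime, List[float]] = defaultdict(list)
    for ts, value in readings:
        buckets[bin_time(ts, size)].append(value)
    return {start: mean(values) for start, values in sorted(buckets.items())}


# Hypothetical temperature readings from one sensor.
t0 = datetime(2025, 12, 3, 10, 0, tzinfo=timezone.utc)
readings = [
    (t0 + timedelta(minutes=1), 20.0),
    (t0 + timedelta(minutes=4), 22.0),
    (t0 + timedelta(minutes=6), 25.0),
]
windows = summarize_avg(readings, timedelta(minutes=5))
```

In Fabric, this whole block collapses into a single `summarize` line, which is exactly the speed-of-implementation advantage described above.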

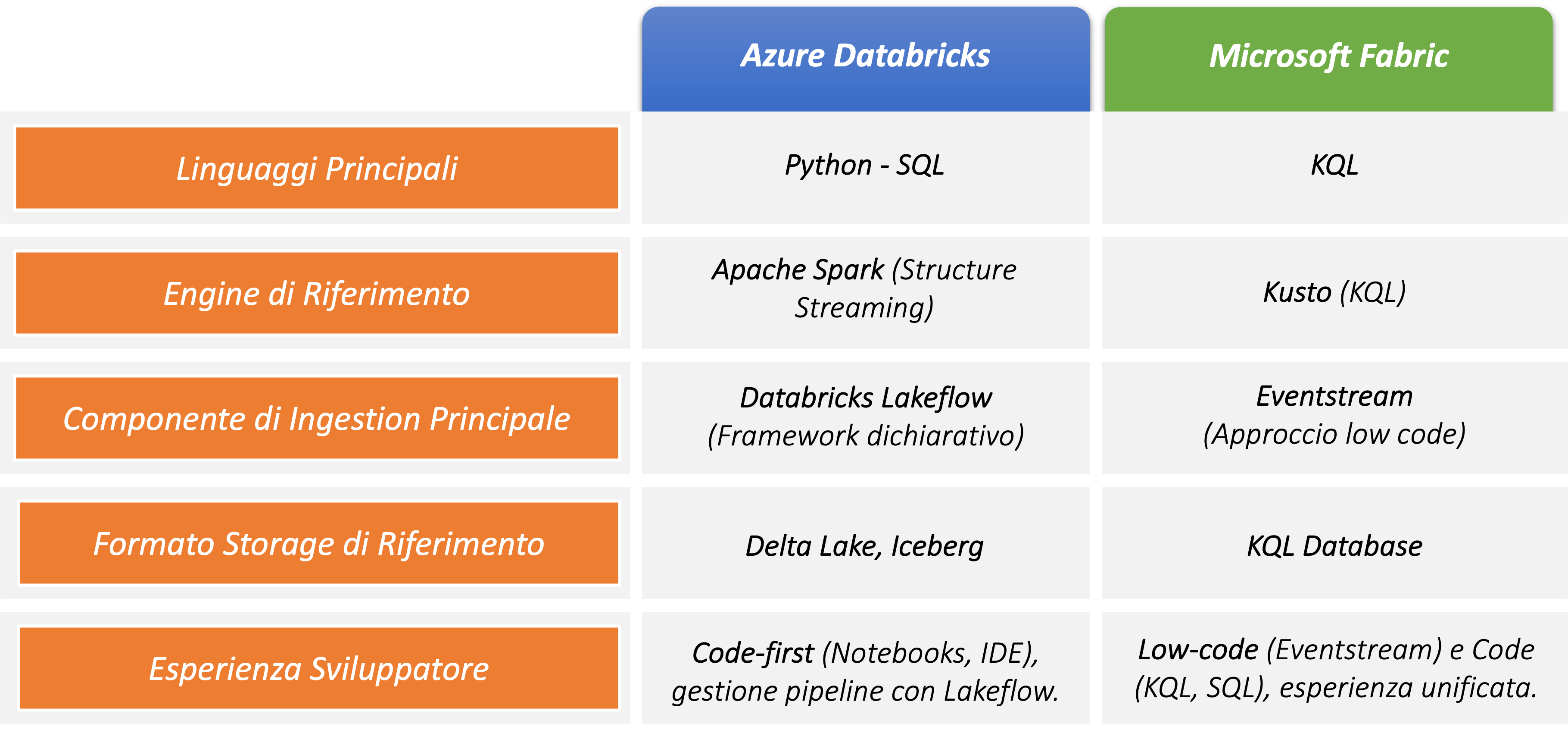

Comparison

To help you choose, we have summarized the key features and recommended use cases in these two slides:

The winning choice: Synergy

I’ll conclude with a provocative question that is also a suggestion: what if we didn’t have to choose? The best solution, budget and skills permitting, could be to adopt both platforms.

Databricks and Fabric are proving that they can work together remarkably well. Using the computing power of Spark for complex data preparation, and the immediacy of Kusto and Fabric for analysis and operational action, lets us get the best of both worlds.

WPC was an incredible experience, and the comparison between these platforms has only just begun.

What approach are you using in your real-time projects? Let’s talk about it on LinkedIn.